Zentara’s unified communications platform requires processing diverse, high-volume workloads, including REST APIs, video streams, and real-time gaming telemetry. Relying on a monolithic load balancing strategy creates inherent bottlenecks.

Therefore, optimizing our infrastructure demands a tiered routing architecture. By decoupling traffic management across OSI Layer 4 and Layer 7, we maximize throughput, enforce strict security perimeters, and ensure seamless scalability for global clients.

To balance routing intelligence with raw performance, we deploy a hybrid load balancing architecture. The Application Load Balancer (ALB) operates at Layer 7: it terminates TCP connections and parses the HTTP request (host, path, headers, and payload) before choosing a target. This proxying adds roughly ~400 ms of latency overhead, which is the cost of complex, content-aware routing.

Conversely, the Network Load Balancer (NLB) operates at Layer 4, evaluating a 5-tuple flow hash (protocol, source/destination IP, and ports) to push raw data at microsecond speeds (~100μs latency). By positioning the NLB at the VPC perimeter to forward TCP traffic to internal ALBs via private IP addresses, we maintain fixed IP boundaries for partner allow-lists while isolating our application-aware routing.

Comparison Matrix

| Architecture Dimension | Application Load Balancer | Network Load Balancer |
| --- | --- | --- |
| OSI Layer | Layer 7 (Application) | Layer 4 (Transport) |
| Supported Protocols | HTTP/1.1, HTTP/2, gRPC, WebSockets | TCP, UDP, TLS, QUIC |
| Default Algorithm | Round-Robin / Least Outstanding | Flow Hash (5-tuple) |
| Target Types | EC2, ECS, IP Addresses, Lambda | EC2, ECS, IP Addresses, ALB |
| IP Addressing | Dynamic DNS resolution only | Static IP & Elastic IP supported |
| Security Features | WAF, OIDC, JWT, mTLS verification | TLS Passthrough, Security Groups |
| Latency Profile | ~400 ms (Proxy processing) | ~100μs (Direct routing) |

We deploy Network Load Balancers at the VPC edge using AWS Hyperplane to handle instantaneous request spikes without the need for manual pre-warming. The NLB bypasses payload inspection entirely: for TCP traffic it selects a target with a Flow Hash algorithm computed over the 5-tuple together with the TCP sequence number, so every packet of a given connection reaches the same target. This transport-layer optimization provides connection integrity, microsecond-level latency, and the static per-Availability-Zone IPs that strict enterprise firewall configurations require.
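The flow-hash idea can be illustrated with a minimal Python sketch. The actual hash function used by the NLB is not public, so SHA-256, the `Flow` type, the `pick_target` helper, and the example addresses below are all assumptions chosen for illustration only.

```python
import hashlib
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class Flow:
    """Fields that identify one TCP flow (hypothetical type for this sketch)."""
    protocol: int   # IANA protocol number, e.g. 6 for TCP
    src_ip: str
    src_port: int
    dst_ip: str
    dst_port: int
    tcp_seq: int    # sequence number observed when the flow is first seen


def pick_target(flow: Flow, targets: List[str]) -> str:
    """Map a flow to one target deterministically.

    SHA-256 is a stand-in: the real load balancer's hash is not documented.
    """
    key = "|".join(
        str(part)
        for part in (
            flow.protocol,
            flow.src_ip,
            flow.src_port,
            flow.dst_ip,
            flow.dst_port,
            flow.tcp_seq,
        )
    ).encode()
    digest = hashlib.sha256(key).digest()
    index = int.from_bytes(digest[:8], "big") % len(targets)
    return targets[index]


if __name__ == "__main__":
    # Example addresses are made up (documentation ranges).
    targets = ["10.0.1.10", "10.0.2.10", "10.0.3.10"]
    flow = Flow(6, "203.0.113.7", 51000, "198.51.100.1", 443, 12345)
    # The same flow always hashes to the same target.
    print(pick_target(flow, targets))
```

Because the result depends only on the flow's identifying fields, no per-packet payload parsing is needed, which is the property the paragraph above relies on.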

To address connection drops during mobile network handoffs (e.g., Wi-Fi to cellular), we configure the NLB to pass through the UDP-based QUIC protocol. Instead of relying on volatile client IP addresses for session stickiness, the NLB parses an 8-byte Server ID embedded directly within the plaintext QUIC Connection ID. This mechanism allows the load balancer to route packets to the original backend instance despite client IP mutations, eliminating the need for repeated TLS handshakes and preserving connection state natively.
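A rough Python sketch of this routing rule follows. It assumes the 8-byte Server ID sits at the start of the destination Connection ID; the exact offset, the `extract_server_id` and `route` helpers, and the registry contents are hypothetical and not taken from AWS documentation.

```python
from typing import Dict, Optional

SERVER_ID_LEN = 8  # 8-byte Server ID, as described above


def extract_server_id(dcid: bytes, offset: int = 0) -> bytes:
    """Return the Server ID bytes from a QUIC destination Connection ID.

    The offset of 0 is an assumption made for this sketch.
    """
    if len(dcid) < offset + SERVER_ID_LEN:
        raise ValueError("connection ID too short to carry a server ID")
    return dcid[offset:offset + SERVER_ID_LEN]


def route(dcid: bytes, registry: Dict[bytes, str]) -> Optional[str]:
    """Pick the backend from the Connection ID alone.

    The client's source IP is deliberately not an input, so a Wi-Fi to
    cellular handoff does not change the routing decision.
    """
    return registry.get(extract_server_id(dcid))


if __name__ == "__main__":
    # Hypothetical registry: Server ID -> backend private IP.
    registry = {bytes.fromhex("0011223344556677"): "10.0.1.10"}
    dcid = bytes.fromhex("0011223344556677") + b"\xaa\xbb\xcc\xdd"
    print(route(dcid, registry))
```

Keeping the client address out of the lookup is the design point: stickiness follows the connection, not the network path.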

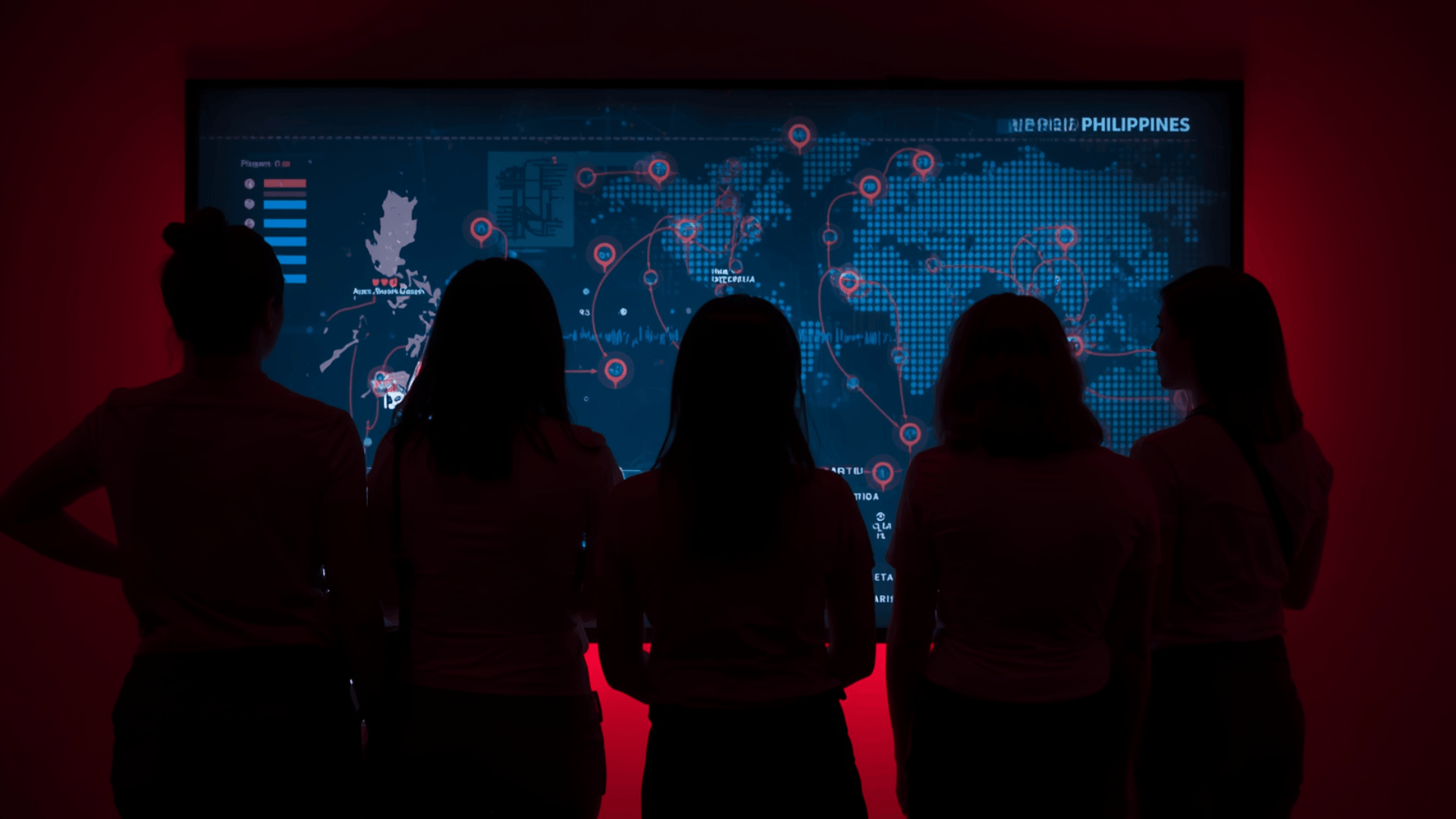

Internal Application Load Balancers manage our complex microservices routing and security enforcement. The ALB intercepts incoming requests to execute native JSON Web Token (JWT) signature verification via Amazon Cognito before forwarding traffic to the backend targets. Additionally, for strict service-to-service communication, the ALB enforces Mutual TLS (mTLS) in Verify Mode, evaluating client certificates against a centralized Certificate Revocation List (CRL) within our trust store to guarantee a zero-trust boundary.
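The accept-or-reject decision in Verify Mode can be sketched in Python as follows. This is a simplified model under stated assumptions: the `ClientCert` type and `verify_mode_accepts` function are hypothetical, and real verification also validates the certificate chain and signatures, which this sketch omits.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import Optional, Set, Tuple


@dataclass(frozen=True)
class ClientCert:
    """Minimal stand-in for a parsed client certificate (hypothetical)."""
    issuer: str
    serial: int
    not_after: datetime


def verify_mode_accepts(
    cert: Optional[ClientCert],
    trusted_issuers: Set[str],
    revoked: Set[Tuple[str, int]],
    now: datetime,
) -> bool:
    """Return True only if the connection should be allowed.

    Chain building and signature checks are intentionally left out.
    """
    if cert is None:
        return False  # verify mode: a client certificate is mandatory
    if cert.issuer not in trusted_issuers:
        return False  # issuer is not in the trust store
    if now > cert.not_after:
        return False  # certificate has expired
    if (cert.issuer, cert.serial) in revoked:
        return False  # certificate appears on the CRL
    return True


if __name__ == "__main__":
    # All names and values below are made up for the example.
    now = datetime(2025, 1, 1, tzinfo=timezone.utc)
    cert = ClientCert("internal-ca", 1001, datetime(2026, 1, 1, tzinfo=timezone.utc))
    print(verify_mode_accepts(cert, {"internal-ca"}, {("internal-ca", 2002)}, now))
```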

Segregating routing responsibilities across the OSI stack yielded immediate performance and economic gains. Implementing QUIC passthrough at Layer 4 eradicated TCP handshake overhead during network roaming, reducing mobile application latency by 30%. Furthermore, we shifted long-lived TCP flows to the NLB, which handles 100,000 active connections per capacity unit compared to the ALB’s 3,000. This optimized our infrastructure utilization and produced a 40% reduction in monthly Elastic Load Balancing expenditures while maintaining high availability.
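The capacity-unit comparison can be made concrete with a short Python calculation. The 600,000-connection workload is a hypothetical figure, not a Zentara measurement. Active connections are only one of several billing dimensions, so this sketch illustrates the ratio rather than reproducing the 40% saving.

```python
NLB_CONNS_PER_UNIT = 100_000  # active connections per NLB capacity unit (from the text)
ALB_CONNS_PER_UNIT = 3_000    # active connections per ALB capacity unit (from the text)


def units_for_connections(active_connections: int, conns_per_unit: int) -> float:
    """Capacity units consumed by the active-connection dimension alone."""
    return active_connections / conns_per_unit


if __name__ == "__main__":
    active = 600_000  # hypothetical number of long-lived TCP flows
    print(units_for_connections(active, NLB_CONNS_PER_UNIT))  # 6.0
    print(units_for_connections(active, ALB_CONNS_PER_UNIT))  # 200.0
```

For the same connection count, the ALB consumes roughly 33 times as many capacity units on this dimension, which is why long-lived flows are the natural candidates to move to Layer 4.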