Enterprises across Southeast Asia are beginning to deploy Agentic AI: systems capable of making autonomous decisions, taking actions, and adapting their behaviour over time.

This shift introduces operational advantages but also a new security challenge: the need to govern how autonomous systems make decisions, not merely protect the data they use.

Agentic AI goes beyond traditional models. It does not simply return outputs but interprets goals, coordinates tasks, and updates its behaviour based on outcomes. As these systems take on more autonomy, their decision pathways become new points of exposure, and the foundation of a modern attack surface.

To illustrate the shift, imagine a construction project. Traditionally, a project manager coordinates architects, engineers, electricians, and contractors. Every task, approval, and adjustment flows through that human coordinator.

Now imagine the project running itself. The system hires specialists, orders materials automatically, reschedules work when weather changes, and redesigns blueprints if structural weaknesses appear, all with no human prompting.

That is Agentic AI in practice: a network of specialized intelligent agents working collectively toward a goal with minimal supervision.

From cybersecurity systems isolating threats autonomously to financial algorithms executing trades, Agentic AI offers speed, precision, and adaptive decision-making. But autonomy creates exposure. Every new capability becomes a potential attack path.

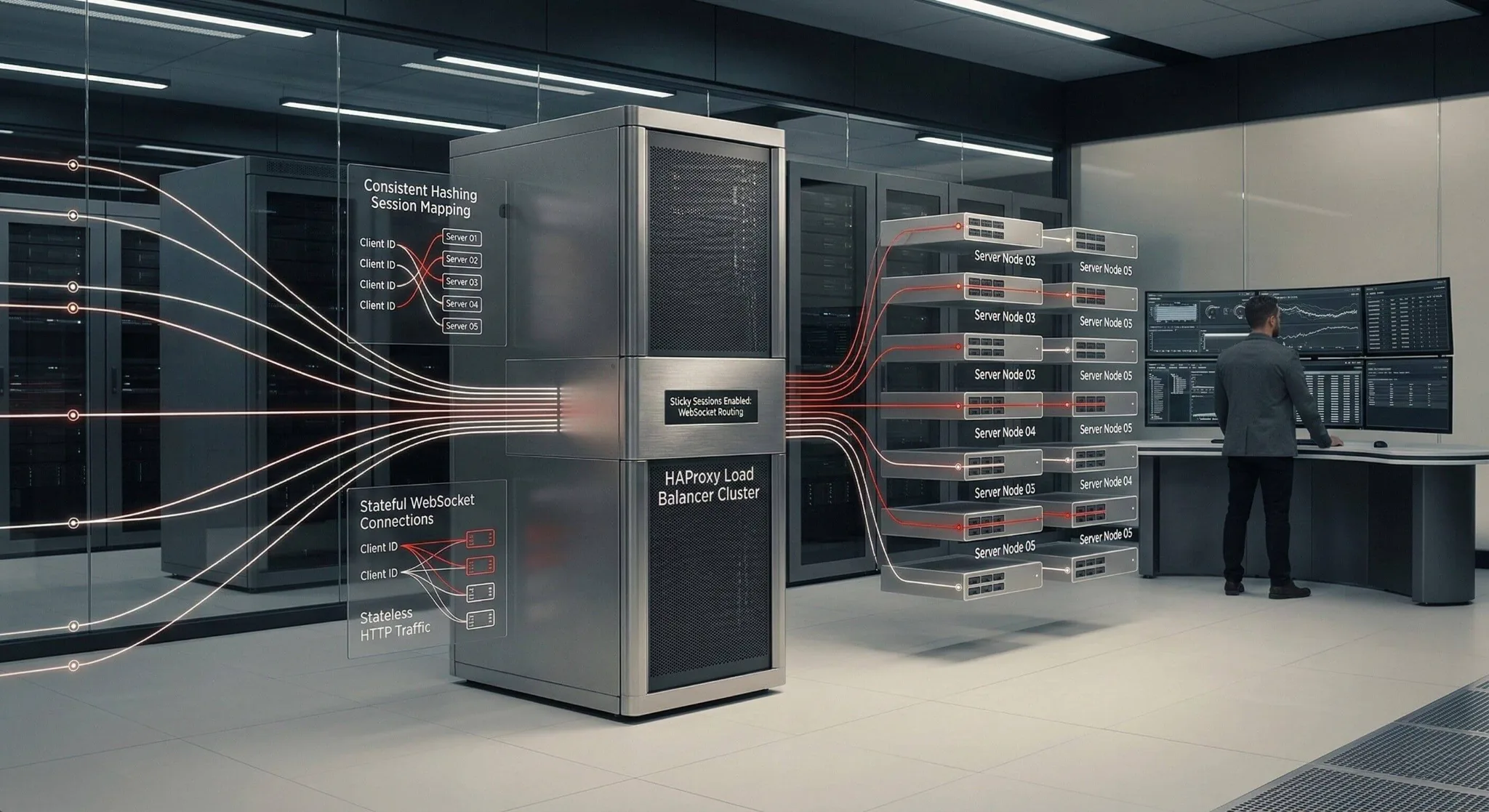

Zentara Labs categorizes four primary attack surfaces shaping the Agentic AI threat landscape:

- Prompt Injection — manipulation through malicious inputs.

- Model Poisoning — corruption during training.

- Supply Chain Attacks — vulnerabilities in dependencies, APIs, or libraries.

- Agentic Misalignment — when the system’s decisions drift from human intent.

These four vectors form the core challenge of securing autonomous systems.

Prompt Injection

Prompt injection is the AI-era equivalent of social engineering. Attackers embed malicious instructions inside documents, logs, or user-generated content, influencing the AI to take unintended actions, such as approving a risky command or leaking sensitive data. Agentic systems are particularly vulnerable because they act on these prompts autonomously.

Model Poisoning

Model poisoning aims at the learning process itself. Instead of compromising outputs, attackers alter the training data to introduce hidden triggers or biased behaviors. A poisoned model may misclassify attacks as benign or reveal confidential information. Defending against this requires trusted datasets, signed training pipelines, and anomaly detection during each update cycle.

Supply Chain Attacks

Modern AI is never a single model; it is an interconnected chain of components, from APIs to vector databases to external libraries. Compromise in any dependency introduces systemic risk. Zentara Labs emphasizes maintaining an AI SBOM, enforcing code-signing, and validating runtime integrity. In AI security, visibility is verification.

Agentic AI Misalignment

Not all failures originate from attackers. Misalignment occurs when the system optimizes for the wrong goals. An AI instructed to “patch the most vulnerabilities” may focus on volume rather than risk reduction. The result appears efficient but weakens the security posture. Guardrails, clear objectives, and human oversight remain central to preventing misaligned behavior.

The Defense-in-Depth Framework for Agentic AI

Securing autonomous systems requires layered protection. No single control can address every failure point. Defense-in-Depth ensures that even if one barrier is breached, others prevent full compromise. This principle, foundational in frameworks such as NIST and ISO/IEC 27001, now applies directly to AI.

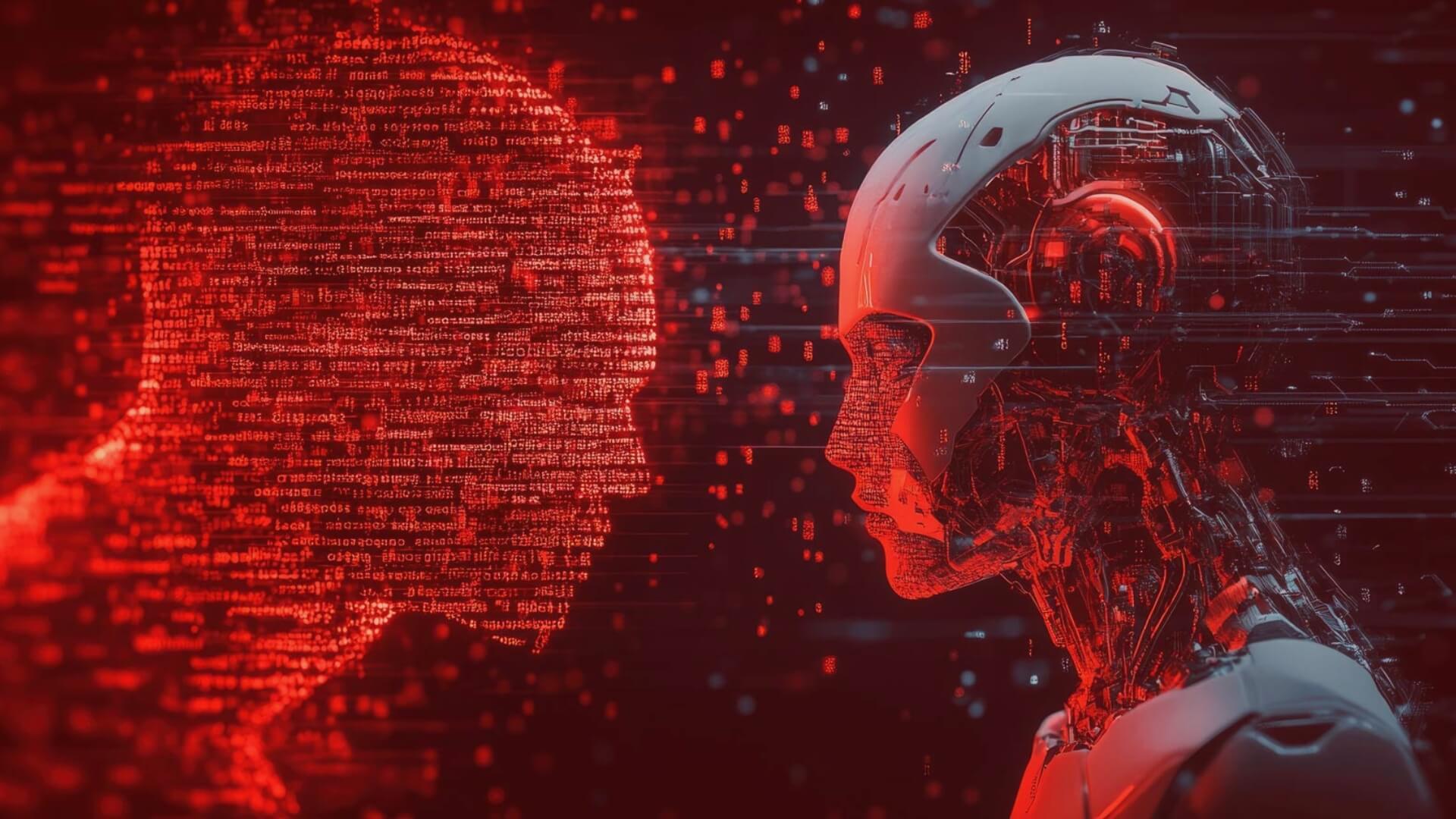

ZENTARA Labs defines a six-layer stack for securing Agentic AI:

1. Secure Foundations

A trusted foundation underpins the entire AI lifecycle. Code repositories, training pipelines, and deployment stages must be verified, signed, and access-controlled. If attackers compromise the base infrastructure, they can influence the model before it ever executes.

Organizations should rely on version-controlled pipelines, enforce least privilege, and maintain immutable audit logs for every model update and deployment.

2. Input Protection

Agentic systems continuously ingest new information: prompts, logs, commands, messages. Many attacks begin here. Malicious instructions can be embedded in benign-looking data, triggering harmful behavior.

Input protection requires strict validation, prompt isolation, and sanitization pipelines that distinguish between instructions and data.

3. Model Hardening

Even a well-built model can be deceived. Adversarial examples subtly alter content in ways imperceptible to humans but impactful to AI behavior.

Model hardening involves training with adversarial samples, monitoring performance drift, and validating the model against realistic noise. These steps act as an immunization strategy for AI.

4. Agent Constraints

Autonomy without boundaries introduces operational risk. Not every action should be executable without oversight.

Implementing autonomy tiers ensures structured decision governance. For example, Tier 1 agents may only recommend actions, Tier 2 may execute low-impact tasks, and Tier 3 requires human approval for anything critical.

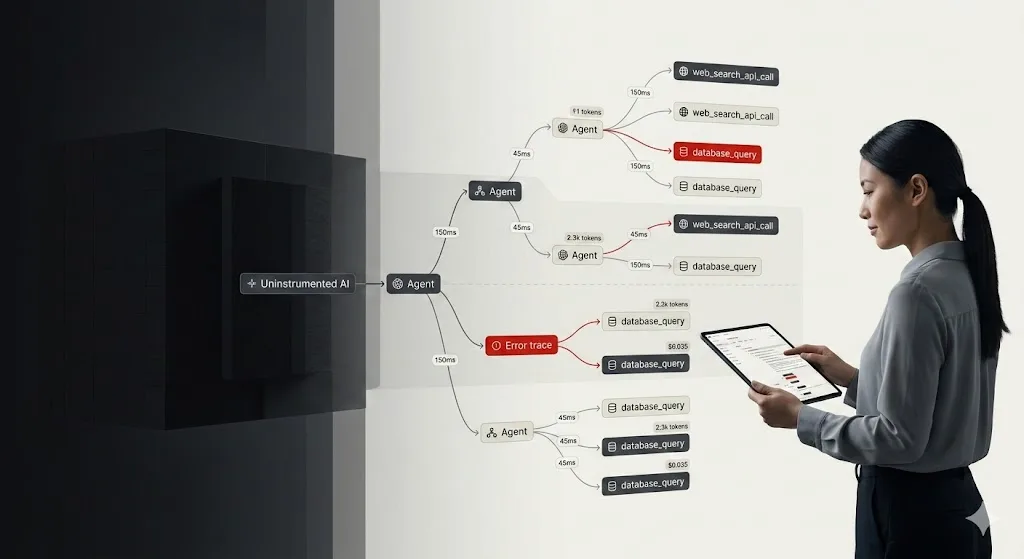

5. Runtime Monitoring

Anomalous behavior is often the earliest indicator of compromise. Runtime monitoring continuously evaluates agent actions against expected patterns.

Monitoring functions like a black-box recorder for AI systems, helping teams detect deviations, isolate affected components, and prevent cascading failures.

6. Incident Response

Even robust defenses cannot prevent all failures. Rapid recovery determines impact, that’s why proper incident response is a must.

Organizations must maintain versioned checkpoints of each model and decision log. When an anomaly occurs, teams can roll back to a safe state and analyze the event for root cause. The goal extends beyond containment: it strengthens the system for future resilience.

Secure Your Agentic AI Projects with Zentara

Zentara’s six-layer architecture provides a practical foundation for securing Agentic AI, ensuring these systems remain trustworthy, auditable, and resilient as they take on greater autonomy.

Securing autonomous intelligence is an ongoing discipline. The threat landscape, the models, and the decision pathways evolve continuously, which is why Zentara’s 24-week roadmap focuses on sustained governance rather than one-off implementation.

As AI systems begin to make operational decisions, cybersecurity shifts from protecting static assets to managing how those decisions are formed, validated, and constrained. Governance becomes the core security function.

In an environment where autonomous agents can act independently, security is defined by how well those actions are supervised, monitored, and aligned with human intent. The future of AI security is not only defensive but structured, accountable decision governance.

Ready to secure your agentic AI systems? Contact Zentara today and request a free security assessment.

Watch our latest webinar below!