AI adoption is accelerating across industries. From customer support automation to internal analytics and decision-making, organisations are embedding AI into critical workflows at speed. The promise is clear: faster insights, improved efficiency, and competitive advantage. What is less visible is the risk.

As AI systems become integrated into daily operations, they introduce security risks that do not fit neatly into traditional security models. Unlike conventional applications, AI systems interact with large volumes of sensitive data and can be accessed in ways that bypass established controls. The real question is not whether AI is being used, but whether it is being used securely.

Why AI Introduces a Different Kind of Risk

AI systems change how data is accessed, processed, and exposed, creating a fundamentally different risk profile compared to traditional software. These AI security risks often emerge through:

Less visible data flows

AI systems often rely on multiple layers, including APIs, external models, and third-party platforms. Data may move across environments that are not fully controlled or monitored by the organisation. This reduces visibility into where sensitive information is stored, processed, or retained. Without clear oversight, data exposure can go undetected.

Expanded and complex access

Access to AI systems is often broader than expected. Developers, analysts, and business users may all interact directly with models through interfaces or integrations. As usage grows, access expands quickly. Without proper control, this creates multiple points where sensitive data can be introduced, accessed, or misused.

Outputs that expose more than intended

AI systems do not just process data; they generate responses. In certain cases, these outputs may include sensitive or proprietary information, especially if the model has been trained on internal datasets or connected to enterprise systems. This creates a new and less obvious form of data leakage.

The Risk of Uncontrolled Model Access

One of the most overlooked AI security risks is how models are accessed across the organisation.

Unrestricted internal usage

AI tools are often deployed to improve productivity, but access controls may not keep pace. Employees may use shared accounts, integrate models into workflows without oversight, or connect external tools to internal systems. This creates unmanaged exposure. A simple prompt containing sensitive information can introduce risk, even if the intent is harmless.

Shadow AI adoption

Just as shadow IT introduced unmanaged infrastructure, shadow AI introduces unmanaged data risk. Teams may independently adopt AI tools, often using public or third-party models without security review. Sensitive information may be uploaded without understanding how it is processed, stored, or reused. This bypasses governance entirely.
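Part of this governance gap can be closed by checking outbound traffic for AI services that have not been through review. The minimal sketch below assumes proxy logs can be reduced to (user, hostname) pairs; `KNOWN_AI_HOSTS`, `APPROVED_AI_HOSTS` and every domain in them are hypothetical placeholders, not a real catalogue of AI providers.

```python
from collections import Counter
from typing import Iterable

# Hypothetical placeholder lists; a real deployment would maintain these centrally.
KNOWN_AI_HOSTS = {"api.example-llm.com", "chat.example-ai.net", "models.internal.example"}
APPROVED_AI_HOSTS = {"models.internal.example"}


def find_shadow_ai(log_records: Iterable[tuple[str, str]]) -> Counter:
    """Count requests per (user, host) to known AI services that are not approved.

    Each record is assumed to be a (user, hostname) pair extracted from proxy logs.
    """
    hits: Counter = Counter()
    for user, host in log_records:
        # Normalise case and any trailing dot so lookups are consistent.
        host = host.lower().rstrip(".")
        if host in KNOWN_AI_HOSTS and host not in APPROVED_AI_HOSTS:
            hits[(user, host)] += 1
    return hits


logs = [
    ("alice", "models.internal.example"),  # approved internal model
    ("bob", "chat.example-ai.net"),        # unreviewed public tool
    ("bob", "chat.example-ai.net"),
    ("carol", "intranet.example"),         # not an AI service
]
for (user, host), count in find_shadow_ai(logs).items():
    print(f"{user} -> {host}: {count} request(s)")  # bob -> chat.example-ai.net: 2 request(s)
```

A host list only catches services someone has already catalogued, so it works best alongside a lightweight review process for new tools rather than as a replacement for one.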

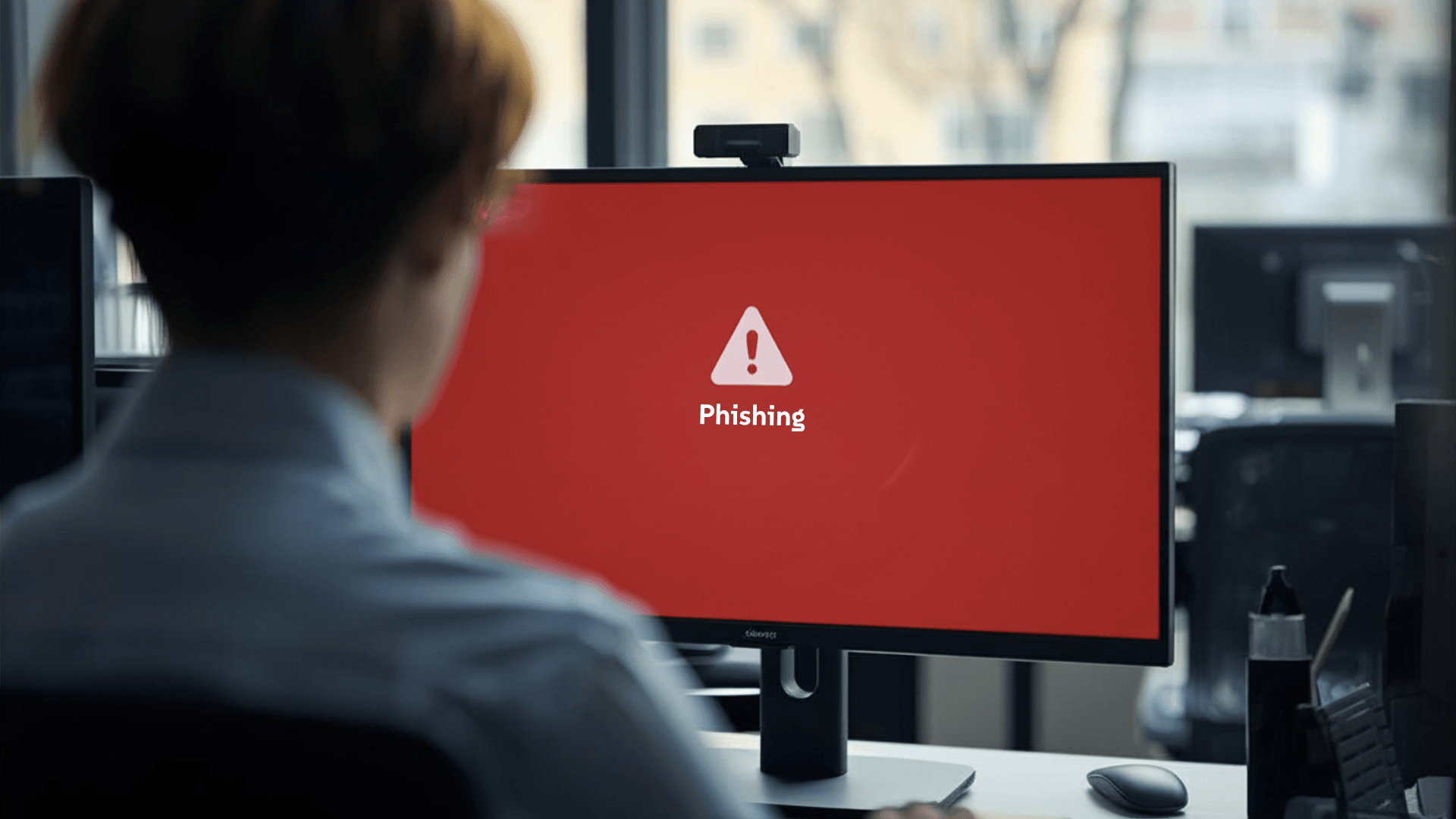

Misuse of legitimate access

Not all risks come from external threats. Users with legitimate access can unintentionally or deliberately expose sensitive information. Examples include:

- Entering confidential data into public AI tools

- Extracting sensitive insights from internal models

- Sharing outputs that contain restricted information

Without monitoring and clear controls, these actions are difficult to detect.

How Data Leakage Happens in AI Environments

Data leakage in AI systems is often indirect. It does not always involve obvious breaches or exfiltration.

Through model inputs

Users may provide sensitive data as input, assuming it will remain private. In some cases, this data may be logged, stored, or used to improve the model. This creates unintended exposure outside the organisation’s control.
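A common first line of defence is to redact obvious sensitive values before a prompt leaves the organisation. This is a minimal sketch, assuming regular-expression matching is acceptable as a first pass; the three patterns and the `redact_prompt` helper are illustrative, and they will miss names, free-text secrets, and most proprietary content.

```python
import re

# Illustrative patterns only; real deployments need broader, tested detection.
REDACTION_PATTERNS = {
    "EMAIL": re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
    # 13 to 16 digits, optionally separated by single spaces or dashes.
    "CARD": re.compile(r"\b\d(?:[ -]?\d){12,15}\b"),
    # Hypothetical key format: "sk-" or "key-" followed by 16+ alphanumerics.
    "API_KEY": re.compile(r"\b(?:sk|key)-[A-Za-z0-9]{16,}\b"),
}


def redact_prompt(prompt: str) -> tuple[str, list[str]]:
    """Replace matches with [LABEL] placeholders and report which labels fired."""
    found = []
    for label, pattern in REDACTION_PATTERNS.items():
        prompt, count = pattern.subn(f"[{label}]", prompt)
        if count:
            found.append(label)
    return prompt, found


safe, labels = redact_prompt("Email jane.doe@example.com about card 4111 1111 1111 1111")
print(safe)    # Email [EMAIL] about card [CARD]
print(labels)  # ['EMAIL', 'CARD']
```

Returning the labels that fired, not just the cleaned text, gives monitoring a signal about what kinds of data users are trying to submit.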

Through model outputs

AI models can generate responses that include sensitive information, especially when prompted in specific ways or connected to internal data sources. This risk increases when users do not fully understand what the model has access to.
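Outputs can also be checked before they are displayed or shared. One simple technique, sketched below, flags a response that repeats any run of n consecutive words from a known restricted document. The `OutputLeakChecker` class, the default n = 8, and the in-memory document list are assumptions for illustration; a paraphrased leak will pass this check, so it complements access restrictions rather than replacing them.

```python
import re


def shingles(text: str, n: int) -> set[tuple[str, ...]]:
    """Return every n-word sequence in text, after lowercasing and dropping punctuation."""
    words = re.findall(r"[a-z0-9]+", text.lower())
    return {tuple(words[i:i + n]) for i in range(len(words) - n + 1)}


class OutputLeakChecker:
    """Hypothetical helper: detects verbatim reuse of restricted text in model output."""

    def __init__(self, restricted_docs: list[str], n: int = 8):
        self.n = n
        # Pre-compute shingles for all restricted documents once.
        self.index: set[tuple[str, ...]] = set()
        for doc in restricted_docs:
            self.index |= shingles(doc, n)

    def leaks(self, model_output: str) -> bool:
        """True if the output shares at least one n-word sequence with restricted text."""
        return not self.index.isdisjoint(shingles(model_output, self.n))


# "Project Falcon" is a made-up example of internal content.
checker = OutputLeakChecker(["Project Falcon budget is approved at 2 million for the next quarter."])
print(checker.leaks("Good news: project Falcon budget is approved at 2 million, pending sign-off."))  # True
```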

Through integrations and APIs

AI systems are frequently integrated with internal platforms such as databases, CRM systems, and cloud services. These integrations expand functionality, but also increase the attack surface. If not properly secured, they can provide indirect access to sensitive data.

Why Traditional Security Controls Fall Short

Traditional security approaches often leave critical gaps when applied to AI, because they focus on infrastructure rather than usage. To address these AI security risks, organisations must look beyond network protection and take a broader approach to Large Language Model (LLM) security, one that covers who can access models, what data goes into them, and what comes out.

Focus on infrastructure, not usage

Most security controls are designed to protect infrastructure and networks. AI risks often emerge from how systems are used, not just how they are deployed. Without visibility into usage, organisations cannot detect risky behaviour.

Limited context in monitoring

Standard monitoring tools capture access events, but not the intent behind interactions with AI systems. They do not show what data is being input or whether outputs contain sensitive information. This limits effective detection.

Governance lags behind adoption

AI adoption often moves faster than governance. Tools are implemented before policies, controls, and oversight mechanisms are fully established. This creates exposure during the most critical phase of adoption.

How To Reduce AI-Related Security Risks

Managing AI security risks requires extending security practices to cover access, usage, and data flow.

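Control model access and monitor usage

Access should be granted through individual, role-based accounts rather than shared credentials, so that every interaction can be attributed to a person. Organisations also need visibility into how AI systems are used in practice. This includes monitoring: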

- Types of data being input

- Frequency and patterns of usage

- Unusual or high-risk interactions

Behavioural monitoring provides early indicators of potential exposure.
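As an illustration of behavioural monitoring, the sketch below flags two of the signals listed above: interactions where sensitive data was detected in the input, and days on which a user's request volume jumps well above their own history. It assumes each interaction is logged with a user, an ISO date, and a count of sensitive-data matches from some upstream detector (not shown); the `Interaction` record and the 3x spike threshold are hypothetical choices.

```python
from dataclasses import dataclass
from statistics import median


@dataclass
class Interaction:
    user: str
    day: str             # ISO date, e.g. "2024-05-01"
    sensitive_hits: int  # matches reported by an upstream detector (assumed)


def flag_interactions(events: list[Interaction], spike_factor: float = 3.0) -> dict[str, set[str]]:
    """Return, per user, the reasons they were flagged."""
    per_day: dict[str, dict[str, int]] = {}
    flags: dict[str, set[str]] = {}
    for e in events:
        days = per_day.setdefault(e.user, {})
        days[e.day] = days.get(e.day, 0) + 1
        if e.sensitive_hits > 0:
            flags.setdefault(e.user, set()).add("sensitive_input")
    for user, days in per_day.items():
        ordered = [days[d] for d in sorted(days)]  # ISO dates sort chronologically
        if len(ordered) < 2:
            continue  # not enough history to form a baseline
        baseline = median(ordered[:-1])  # the user's own history, excluding the latest day
        if ordered[-1] > spike_factor * baseline:
            flags.setdefault(user, set()).add("volume_spike")
    return flags


events = [Interaction("alice", "2024-05-01", 0)] * 5 + [Interaction("alice", "2024-05-02", 0)] * 20
print(flag_interactions(events))  # {'alice': {'volume_spike'}}
```

Comparing each user against their own median, rather than a global threshold, avoids flagging heavy but consistent users while still surfacing sudden changes.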

Establish clear data policies

Guidelines should set clear rules for how AI tools may be used, including:

- Prohibiting sensitive data in public models

- Defining acceptable use cases

- Setting retention and storage rules

Policies reduce ambiguity, guide consistent behaviour, and help maintain data privacy compliance as the regulatory landscape evolves.
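Policies are easier to apply consistently when they can also be checked automatically at the point of use. The following is a minimal sketch, assuming a three-level data classification and two destination types; the classification levels, destination names, and approved use cases are hypothetical examples, and retention rules are left out for brevity.

```python
from enum import Enum


class Classification(Enum):
    PUBLIC = 0
    INTERNAL = 1
    CONFIDENTIAL = 2


# Hypothetical policy: the highest classification each destination may receive.
MAX_CLASSIFICATION = {
    "public_model": Classification.PUBLIC,
    "internal_model": Classification.CONFIDENTIAL,
}
# Hypothetical list of use cases approved for AI assistance.
APPROVED_USE_CASES = {"drafting", "summarisation", "code_review"}


def check_policy(data: Classification, destination: str, use_case: str) -> list[str]:
    """Return a list of policy violations; an empty list means the request is allowed."""
    violations = []
    limit = MAX_CLASSIFICATION.get(destination)
    if limit is None:
        violations.append(f"unknown destination: {destination}")
    elif data.value > limit.value:
        violations.append(f"{data.name} data not permitted in {destination}")
    if use_case not in APPROVED_USE_CASES:
        violations.append(f"use case not approved: {use_case}")
    return violations


print(check_policy(Classification.CONFIDENTIAL, "public_model", "drafting"))
# ['CONFIDENTIAL data not permitted in public_model']
```

Treating unknown destinations as violations means a newly adopted tool is blocked until someone decides where it fits in the policy.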

Secure integrations and data flows

AI integrations should be treated as part of the broader attack surface. This involves:

- Securing APIs and access points

- Encrypting data in transit and at rest

- Limiting data exposure between systems

Strong controls reduce the risk of indirect access.
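Limiting data exposure between systems often comes down to sending a model only the fields a task needs. This sketch assumes a support assistant that reads CRM records as Python dictionaries; the field names and the `minimise_record` helper are hypothetical.

```python
from typing import Any

# Hypothetical allow-list: the only CRM fields the support assistant may see.
ALLOWED_FIELDS = {"ticket_id", "product", "issue_summary", "status"}


def minimise_record(record: dict[str, Any], allowed: set[str] = ALLOWED_FIELDS) -> dict[str, Any]:
    """Return a copy of the record containing only allow-listed fields."""
    return {k: v for k, v in record.items() if k in allowed}


crm_record = {
    "ticket_id": "T-1042",
    "product": "Billing portal",
    "issue_summary": "Invoice PDF fails to download",
    "status": "open",
    "customer_email": "jane.doe@example.com",  # excluded: not needed for the task
    "card_last4": "1111",                      # excluded: payment data
}
context = minimise_record(crm_record)
print(sorted(context))  # ['issue_summary', 'product', 'status', 'ticket_id']
```

An allow-list is used rather than a block-list so that any new field added to the CRM later is excluded by default until someone decides the model should see it.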

From Innovation To Controlled Adoption

AI brings significant opportunity, but also introduces risks that are easy to overlook. Model access and data leakage are not edge cases. They are fundamental challenges that come with how AI systems operate.

Organisations that treat AI as just another tool will struggle to manage these risks. Those that recognise its unique security implications will be better positioned to adopt it safely. The goal is not to slow down innovation. It is to ensure that innovation does not create unintended exposure.

If your organisation is adopting AI without clear control over access and data usage, it is time to reassess your approach.

Explore how Zentara helps organisations secure AI adoption with better visibility, controlled access, and protection against data leakage risks.