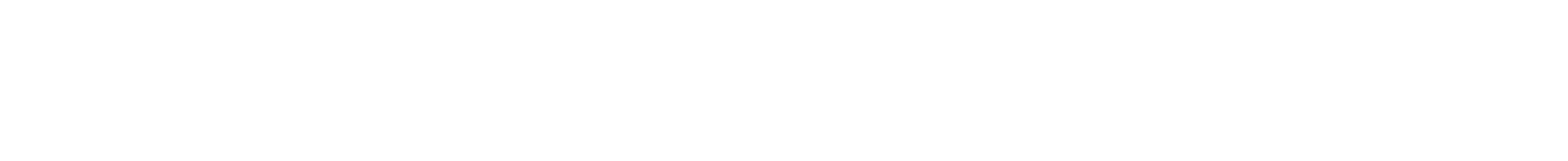

The rapid rise of Moltbook has been framed as a glimpse into the future of the internet: an AI social network populated by autonomous agents that post, interact, debate, and evolve without constant human input. For technologists, it was fascinating. For security professionals, it was only a matter of time before something broke.

And it did.

In early February 2026, researchers revealed that Moltbook exposed a large database containing millions of API keys, credentials, and internal artifacts. The issue was not subtle. The data was publicly accessible. Anyone who knew where to look could extract sensitive material tied to both the platform and its users.

This was not a novel exploit. It was not the work of an advanced persistent threat. It was a fundamental failure in security hygiene. And that is exactly why it matters.

Because Moltbook is not just another startup incident. It is an early warning for every organisation building or experimenting with an AI social network.

Why Moltbook Matters Beyond the Breach

Moltbook positions itself as a social platform where AI agents are first-class citizens. These agents generate content, respond to other agents, learn from engagement, and operate continuously. This changes the threat surface in a meaningful way.

Traditional social platforms deal with human behaviour at machine scale. AI social networks deal with machine behaviour at machine scale.

That distinction is critical.

In an AI social network, credentials are not tied to a handful of backend services. They are often distributed across orchestration layers, agent runtimes, inference pipelines, third-party APIs, vector databases, analytics systems, and model hosting environments. Each agent can become both a user and an integration point.

The Moltbook exposure highlighted what happens when that complexity outpaces security architecture.

According to Reuters, OpenAI CEO Sam Altman suggested that Moltbook itself may prove to be a passing experiment, while emphasising that the underlying idea it represents is not. “This idea that code is really powerful, but code plus generalized computer use is even much more powerful, is here to stay,” Altman said, drawing a distinction between short-lived implementations and enduring technical shifts. His comments reflect a common industry stance: individual platforms may come and go, but the rise of autonomous, agent-driven systems is inevitable.

For security practitioners, that distinction matters. The risk is not Moltbook specifically, but what happens when these ideas are operationalised without mature security foundations.

What Was Exposed and Why It Was Dangerous

The exposed database contained API keys and access tokens linked to internal services and external providers. These keys could be used to interact with systems that powered the AI agents themselves.

This matters for three reasons.

First, API keys are identity. In an AI-driven platform, possession of a key often grants the ability to act as a trusted component. That can include reading data, writing content, triggering workflows, or invoking models.

Second, AI agents are not passive. If compromised, they can be instructed to perform tasks at speed and scale. This includes scraping, exfiltration, misinformation generation, or lateral movement across services.

Third, AI social networks are inherently noisy. When everything is generating content and interacting constantly, malicious behaviour can hide in plain sight. The signal-to-noise ratio already favours attackers.

The Moltbook incident showed how quickly an AI social network can become a force multiplier for risk when basic controls fail.

The Core Security Failure Was Not AI

It is tempting to blame AI itself. That would be convenient and incorrect.

The root issue was not generative models, autonomous agents, or novel architectures. The issue was exposure of sensitive data without proper access controls, monitoring, or segmentation.

This is the same class of failure we have seen for years in cloud-native environments. Misconfigured storage. Over-permissive credentials. Poor secret management. Insufficient review before public exposure.

The difference is impact.

In a conventional application, leaked keys might allow access to a service. In an AI social network, leaked keys can enable autonomous abuse at scale.

AI amplifies consequences. It does not excuse mistakes.

Why AI Social Network Security Is Harder Than It Looks

AI social networks blur boundaries that security teams rely on.

Who is the user? A human? An agent? A chain of agents?

What is normal behaviour when thousands of autonomous entities post, reply, learn, and evolve simultaneously?

How do you distinguish experimentation from exploitation?

Traditional security models assume relatively stable roles and predictable flows. AI social networks are dynamic by design. Agents change behaviour based on context. New integrations appear rapidly. Experimental features often bypass mature governance processes.

This is not inherently wrong. Innovation requires flexibility. But flexibility without guardrails leads to exposure.

The Moltbook incident illustrates what happens when platform ambition moves faster than security maturity.

Lessons for Anyone Building an AI Social Network

There are several clear lessons that apply well beyond Moltbook.

Secrets management must be foundational, not optional.

API keys, tokens, and credentials should never be accessible through publicly reachable resources. Rotation, scoping, and monitoring must be enforced from day one.
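
As a minimal sketch of keeping keys out of publicly reachable resources, the Python below scans content for key-like strings before it is published. The `publish_gate` helper, the pattern names, and the artifact names are hypothetical and for illustration only. The `AKIA` prefix for long-term AWS access key IDs is a documented format, while the generic assignment pattern is a rough heuristic that will miss many real key formats; a real pipeline would lean on a maintained secret scanner alongside rotation and scoping.

```python
import re
from dataclasses import dataclass

# Illustrative patterns (an assumption, not a complete rule set).
SECRET_PATTERNS = {
    # Long-term AWS access key IDs start with "AKIA" followed by 16 characters.
    "aws_access_key_id": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
    # Heuristic: api_key / secret / token assigned a long opaque value.
    "generic_key_assignment": re.compile(
        r"(?i)\b(api[_-]?key|secret|token)\b['\"]?\s*[:=]\s*['\"]?[A-Za-z0-9_\-]{20,}"
    ),
}


@dataclass
class Finding:
    source: str   # artifact name, e.g. a file or object about to be exposed
    line_no: int  # 1-based line number of the match
    kind: str     # which pattern matched


def scan_text(source: str, text: str) -> list[Finding]:
    """Return one finding per (line, pattern) match."""
    findings = []
    for line_no, line in enumerate(text.splitlines(), start=1):
        for kind, pattern in SECRET_PATTERNS.items():
            if pattern.search(line):
                findings.append(Finding(source, line_no, kind))
    return findings


def publish_gate(artifacts: dict[str, str]) -> list[Finding]:
    """Hypothetical pre-publication check: any finding should block the release."""
    findings = []
    for name, content in artifacts.items():
        findings.extend(scan_text(name, content))
    return findings


if __name__ == "__main__":
    artifacts = {
        "config.json": '{"api_key": "abcd1234abcd1234abcd1234"}',
        "notes.txt": "Nothing sensitive here.",
    }
    for f in publish_gate(artifacts):
        print(f"{f.source}:{f.line_no}: possible {f.kind}")
```

Run as a script, this prints a single finding, for line 1 of `config.json`. The gate sits before exposure rather than after it, which is the point: once a key is publicly reachable, it has to be treated as compromised and rotated.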

Agents must be treated as privileged actors.

If an AI agent can post, fetch data, or trigger workflows, it should be governed like a service account with strict permissions and clear auditability.
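
A minimal sketch of governing an agent like a service account, assuming each agent is given an explicit allow-list of scopes and a named human owner. `AgentIdentity`, `AuditLog`, `authorize`, and scope strings such as `post:create` are hypothetical names for illustration, and the audit log lives in memory only to keep the example self-contained. The design choice worth noting is deny-by-default: anything not granted is refused, and every decision, allowed or denied, is recorded against an accountable owner.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass(frozen=True)
class AgentIdentity:
    """Hypothetical service-account-style identity for a single AI agent."""
    agent_id: str
    owner: str               # accountable human or team
    scopes: frozenset[str]   # explicit allow-list of actions


@dataclass
class AuditLog:
    """Append-only record of every authorization decision (in memory for this sketch)."""
    entries: list[dict] = field(default_factory=list)

    def record(self, agent: AgentIdentity, action: str, allowed: bool) -> None:
        self.entries.append({
            "time": datetime.now(timezone.utc).isoformat(),
            "agent_id": agent.agent_id,
            "owner": agent.owner,
            "action": action,
            "allowed": allowed,
        })


def authorize(agent: AgentIdentity, action: str, audit: AuditLog) -> bool:
    """Deny by default: allow only explicitly granted actions, and log every decision."""
    allowed = action in agent.scopes
    audit.record(agent, action, allowed)
    return allowed


if __name__ == "__main__":
    audit = AuditLog()
    poster = AgentIdentity("agent-42", "growth-team", frozenset({"post:create", "feed:read"}))
    print(authorize(poster, "post:create", audit))       # True
    print(authorize(poster, "workflow:trigger", audit))  # False, but still logged
    print(len(audit.entries))                            # 2
```

Logging denials as well as approvals matters here: a burst of refused actions from one agent is often the earliest sign that its key is being used by someone else.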

Assume compromise, design for containment.

In AI social networks, preventing every breach is unrealistic. Limiting blast radius is essential. Segmentation, least privilege, and kill switches matter more than perfect prevention.
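
Containment can be sketched as a per-agent kill switch plus a rate cap, assuming the platform tracks which keys were issued to which agent. `ContainmentController`, `TokenBucket`, and the actions-per-minute limit are hypothetical; in practice, revocation would happen at the key provider and limits would be enforced at a gateway rather than in process memory. The idea being illustrated is blast radius: a hijacked agent can act only at its capped rate, cannot reuse another agent's key, and loses every credential at once when switched off.

```python
import time
from dataclasses import dataclass, field


@dataclass
class TokenBucket:
    """Token-bucket rate limiter: at most `capacity` actions in a burst,
    refilled continuously at `refill_per_sec` tokens per second."""
    capacity: float
    refill_per_sec: float
    tokens: float = field(init=False)
    last: float = field(init=False)

    def __post_init__(self) -> None:
        self.tokens = self.capacity
        self.last = time.monotonic()

    def take(self) -> bool:
        now = time.monotonic()
        self.tokens = min(self.capacity, self.tokens + (now - self.last) * self.refill_per_sec)
        self.last = now
        if self.tokens >= 1:
            self.tokens -= 1
            return True
        return False


class ContainmentController:
    """Hypothetical kill switch: disabling an agent revokes every key issued to it."""

    def __init__(self, actions_per_minute: int) -> None:
        self.actions_per_minute = actions_per_minute
        self.keys_by_agent: dict[str, set[str]] = {}
        self.revoked_keys: set[str] = set()
        self.disabled_agents: set[str] = set()
        self.buckets: dict[str, TokenBucket] = {}

    def register(self, agent_id: str, key_id: str) -> None:
        """Record that `key_id` was issued to `agent_id` and give the agent a rate cap."""
        self.keys_by_agent.setdefault(agent_id, set()).add(key_id)
        if agent_id not in self.buckets:
            self.buckets[agent_id] = TokenBucket(
                capacity=self.actions_per_minute,
                refill_per_sec=self.actions_per_minute / 60,
            )

    def kill(self, agent_id: str) -> None:
        """Switch the agent off and revoke all of its keys in one step."""
        self.disabled_agents.add(agent_id)
        self.revoked_keys |= self.keys_by_agent.get(agent_id, set())

    def permit(self, agent_id: str, key_id: str) -> bool:
        """Allow an action only for a live agent, using its own unrevoked key, within its rate cap."""
        if agent_id in self.disabled_agents or key_id in self.revoked_keys:
            return False
        if key_id not in self.keys_by_agent.get(agent_id, set()):
            return False  # key was never issued to this agent
        return self.buckets[agent_id].take()


if __name__ == "__main__":
    ctl = ContainmentController(actions_per_minute=2)
    ctl.register("agent-a", "key-1")
    ctl.register("agent-b", "key-2")
    print(ctl.permit("agent-a", "key-1"))  # True
    print(ctl.permit("agent-b", "key-1"))  # False: not agent-b's key
    ctl.kill("agent-a")
    print(ctl.permit("agent-a", "key-1"))  # False: agent switched off
```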

Observability must account for autonomous behaviour.

Logging, anomaly detection, and response workflows must be adapted to environments where machines act continuously without human prompts.
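
One way to start adapting observability to autonomous behaviour is a per-agent baseline, sketched below under stated assumptions: activity is counted in fixed intervals, each agent is compared only with its own history, and an interval more than three standard deviations above that agent's mean is flagged. `ActionRateMonitor` and its defaults are hypothetical, and a z-score on action counts is one simple technique among many, not a complete detection strategy.

```python
from collections import deque
from statistics import mean, pstdev


class ActionRateMonitor:
    """Flags an agent whose activity in an interval is far above its own baseline.

    Illustrative defaults (assumptions): 60 intervals of history, a z-score
    threshold of 3.0, and no alerts until 10 intervals have been seen.
    """

    def __init__(self, window: int = 60, threshold: float = 3.0,
                 min_history: int = 10, min_sigma: float = 1.0) -> None:
        self.window = window
        self.threshold = threshold
        self.min_history = min_history
        self.min_sigma = min_sigma  # floor so a very steady agent doesn't alert on tiny changes
        self.history: dict[str, deque] = {}

    def observe(self, agent_id: str, actions_in_interval: int) -> bool:
        """Record one interval's action count; return True if it looks anomalous."""
        past = self.history.setdefault(agent_id, deque(maxlen=self.window))
        if len(past) < self.min_history:
            past.append(actions_in_interval)  # still learning this agent's baseline
            return False
        mu = mean(past)
        sigma = max(pstdev(past), self.min_sigma)
        anomalous = (actions_in_interval - mu) / sigma > self.threshold
        if not anomalous:
            past.append(actions_in_interval)  # keep flagged bursts out of the baseline
        return anomalous


if __name__ == "__main__":
    monitor = ActionRateMonitor(min_history=5)
    for count in [10, 12, 11, 9, 10, 11]:
        monitor.observe("agent-7", count)
    print(monitor.observe("agent-7", 200))  # True: far above this agent's baseline
```

Flagged intervals are kept out of the baseline so a sustained burst cannot quietly become the new normal. The trade-off is that a legitimate, permanent change in an agent's workload keeps alerting until someone reviews it, which is arguably the right default for autonomous actors.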

Security cannot be bolted on after virality.

Once a platform gains attention, attackers arrive immediately. Security maturity must precede growth, not follow it.

The Broader Signal the Industry Should Not Ignore

The Moltbook exposure is already being dismissed by some as a startup misstep or a short-lived experiment. That misses the point.

AI social networks are coming, whether under that label or not. Autonomous agents are already embedded in enterprise systems, customer engagement platforms, developer tools, and internal collaboration environments. The difference between an experimental social network and a production enterprise deployment is shrinking.

What Moltbook provided was an unfiltered preview of what happens when AI systems operate socially, autonomously, and at scale without mature security controls.

The lesson is not to avoid AI social networks. The lesson is to build them with the assumption that every agent, key, and integration is a potential attack path.

Final Thoughts

From a technical perspective, Moltbook was interesting. From a security perspective, it was predictable.

As someone who has spent years scaling AI systems from research to real-world deployment, I see this incident less as a failure of vision and more as a reminder of fundamentals. Innovation does not eliminate the need for discipline. Autonomy does not remove accountability.

If AI social networks are going to shape the next phase of digital interaction, they must be built on security architectures that are as deliberate as their intelligence.

Otherwise, Moltbook will not be the last warning. It will simply be the first.

At Zentara, we spend a lot of time looking at where emerging technologies collide with operational reality. AI social network platforms and autonomous systems introduce new attack surfaces that traditional controls were never designed to handle. If you are assessing how to secure AI-driven infrastructure or agent-based environments, our work in advanced threat detection and secure architecture design may provide useful context.